Measuring Throughput Performance: DNS vs. TCP Anycast Routing

Post Author:

CacheFly Team

Date Posted:

July 11, 2014

Since 2002, when we pioneered the first TCP-anycast CDN, CacheFly has always used throughput and availability as the two metrics that drive us as a company.

Many CDNs rely on DNS-based routing instead. The two approaches differ in several ways, and those differences translate directly into throughput, the real indicator of a CDN’s performance, as well as availability. Since customers frequently ask us how the technologies differ, here’s a quick overview of the benefits of TCP-anycast routing over DNS-based routing.

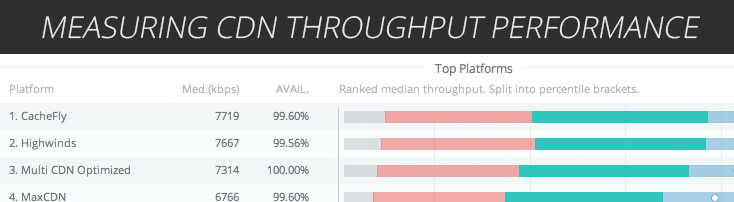

Highest average CDN throughput for a 100 KB file in the U.S., as measured by Cedexis in April 2014.

Traditional DNS CDN

DNS-based routing is known as the ‘traditional’, or old-school, way of doing global traffic management. It works by identifying the customer’s DNS server, making an informed guess about where that server is located and which CDN location is closest to it, and returning that location’s IP address. This operates under the assumption that the physical location and network topology of the DNS server are a good approximation of those of the actual client behind it. This is a big leap of faith* (especially in the age of services like OpenDNS and Google Public DNS carrying a significant amount of the world’s DNS traffic). As an example, a certain DSL provider maintains its DNS infrastructure in Atlanta, yet almost 60% of its subscribers are in Southern California. As a result, 100% of the traffic behind those DNS servers is served from Atlanta. Not good.

* The edns-client-subnet extension (which CacheFly supports and uses with OpenDNS and Google, among others) ‘fixes’ this problem. However, DNS-based CDNs are struggling with the transition: their systems were designed to map nameservers to POPs, and edns-client-subnet effectively requires them to map the entire routing table. Monitoring performance and availability on a prefix-by-prefix basis in real time is a much bigger challenge.
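To make the resolver-versus-client mismatch concrete, here is a minimal sketch of how a DNS-based mapping decision might look. Everything in it is an assumption for illustration: the two-entry `POPS` table, the coordinates, and the use of geographic distance as a stand-in for whatever network measurements a real CDN would use.

```python
import math

# Hypothetical POP coordinates (latitude, longitude), for illustration only.
POPS = {
    "atlanta": (33.75, -84.39),
    "los_angeles": (34.05, -118.24),
}

def haversine_km(a, b):
    """Great-circle distance between two (lat, lon) points in kilometers."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a[0], a[1], b[0], b[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))

def nearest_pop(location):
    """Return the name of the POP geographically closest to `location`."""
    return min(POPS, key=lambda name: haversine_km(POPS[name], location))

def dns_route(resolver_location, client_subnet_location=None):
    """Choose a POP from what the authoritative DNS server can see.

    Without edns-client-subnet only the resolver's location is known;
    with it, the client's own subnet location can be used instead.
    """
    if client_subnet_location is not None:
        return nearest_pop(client_subnet_location)
    return nearest_pop(resolver_location)

resolver_in_atlanta = (33.75, -84.39)
client_in_san_diego = (32.72, -117.16)

print(dns_route(resolver_in_atlanta))                       # atlanta
print(dns_route(resolver_in_atlanta, client_in_san_diego))  # los_angeles
```

The first call mirrors the DSL example: the Southern California subscriber is mapped to Atlanta because only the resolver is visible. The second call shows what changes once the client subnet is available.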

More importantly, availability and failover are a large challenge with a DNS solution. The TTL of the response must expire before clients can change locations, and even then, some clients cache the first response, so users have to restart their browser or client to get a new IP if a POP goes offline (assuming the CDN is even aware that it became unreachable). This can be mitigated by choosing a low TTL, which many providers do; in turn, however, this hurts performance, because resolvers must frequently re-request the same DNS record, delaying the first connection for hostnames that should already be in the resolver’s cache.
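The TTL trade-off can be put into rough numbers. The sketch below is a simplified model, not a measurement: the function names and the 60-second outage detection time are assumptions, and it ignores clients that pin the first answer until restart.

```python
SECONDS_PER_DAY = 86400

def worst_case_stale_seconds(ttl_seconds, detection_seconds):
    """Upper bound on how long a resolver may keep returning a dead POP's IP.

    The CDN first has to notice the outage (detection_seconds), and a
    resolver that cached the old answer just before the change keeps it
    for up to one full TTL.
    """
    return detection_seconds + ttl_seconds

def upstream_lookups_per_day(ttl_seconds):
    """Approximate refreshes per day for one record on a busy resolver.

    Assumes the record is requested continuously, so it is re-fetched
    roughly once per TTL.
    """
    return SECONDS_PER_DAY // ttl_seconds

for ttl in (30, 3600):
    print(ttl, worst_case_stale_seconds(ttl, 60), upstream_lookups_per_day(ttl))
# 30 90 2880
# 3600 3660 24
```

Under these assumptions, a 30-second TTL shortens the failover window to about a minute and a half but forces thousands of refreshes a day per resolver, while a one-hour TTL keeps the record cached but can leave clients pointed at a dead POP for about an hour.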

TCP-Anycast CDN

Our TCP-anycast method leverages the best of both worlds: it uses both DNS and the actual core routing table of the Internet (BGP) to take client requests and serve them from where the *client* is located on the Internet, letting the provider’s internal routing metrics find the topologically closest CDN server. This is a huge win for both performance and availability. With anycast, the actual IP address of endpoints never changes, which means we can use a high TTL to ensure a great end-user experience by letting resolvers cache a response. In the event of a provider outage, or if we need to take a POP offline for maintenance, traffic is seamlessly routed to the next best location, without requiring a browser restart, and with a rapid convergence time that’s simply not possible with DNS solutions.
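As a rough illustration of why failover needs no DNS change, the sketch below models several POPs announcing the same anycast address and a client network choosing among them. It is a heavy simplification: real BGP best-path selection weighs local preference, MED, and other attributes before and after AS-path length, and the POP names, path lengths, and address here are hypothetical.

```python
# Documentation address from RFC 5737, standing in for an anycast IP.
ANYCAST_IP = "192.0.2.1"

def best_pop(announcements):
    """Pick the POP with the shortest AS path for the shared anycast prefix.

    `announcements` maps a POP name to the AS-path length seen from the
    client's network. Returns None if no POP is announcing the prefix.
    """
    if not announcements:
        return None
    return min(announcements, key=announcements.get)

# Hypothetical view from one client network.
routes = {"chicago": 2, "dallas": 3, "new_york": 4}

before = best_pop(routes)
routes.pop("chicago")  # the POP withdraws its BGP announcement
after = best_pop(routes)

print(ANYCAST_IP, before)  # 192.0.2.1 chicago
print(ANYCAST_IP, after)   # 192.0.2.1 dallas
```

The address the client connects to is the same before and after the withdrawal; only the routing decision changes, which is why a long DNS TTL does not get in the way of failover.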

Evaluating CDNs? Look for throughput.

Many of our customers use CacheFly to deliver larger files: videos, apps, games, software downloads. Our throughput performance makes it a no-brainer to use CacheFly. What most people don’t realize is that throughput is *as important* for small object and web page delivery. When researching web performance, it’s easy to be convinced that response time or time-to-first-byte (TTFB) is the metric you need to optimize for. Those same articles and so-called experts will also tell you it’s extremely important to enable browser-side caching, so that your clients don’t have to make a conditional request (answered with a 304) back to the CDN.

Here’s the thing: measuring ‘response time’ or TTFB is simply measuring the performance of 304 responses (headers without content). These are the very requests you just eliminated with client-side browser caching!

So, if you’re not re-requesting content from the CDN, you want that first request (200 response) to complete as fast as possible. That’s time-to-last-byte (TTLB). That’s throughput!

Start optimizing your static objects for time-to-last-byte and your site will load faster, period.

So why do people still focus on response time?

First, it’s still a huge factor in loading dynamic, server-generated content where the payload is small and the client spends most of the time waiting for the response to be generated.

However, for large, static content, the TTFB is a small percentage of the overall request – the client spends most of the time actually downloading the object.
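A back-of-the-envelope model shows how small that percentage can be. The sketch below assumes a constant average transfer rate after the first byte (it ignores TCP slow start and similar effects), and the 50 ms TTFB, 50 MB file, and throughput figures are hypothetical.

```python
def ttlb_seconds(ttfb_seconds, size_bytes, throughput_bytes_per_sec):
    """Time-to-last-byte: wait for the first byte, then transfer the body."""
    return ttfb_seconds + size_bytes / throughput_bytes_per_sec

def ttfb_share(ttfb_seconds, size_bytes, throughput_bytes_per_sec):
    """Fraction of the total request time spent waiting for the first byte."""
    total = ttlb_seconds(ttfb_seconds, size_bytes, throughput_bytes_per_sec)
    return ttfb_seconds / total

size = 50_000_000  # 50 MB object (hypothetical)
ttfb = 0.05        # 50 ms to first byte (hypothetical)

slow = ttlb_seconds(ttfb, size, 5_000_000)   # 5 MB/s
fast = ttlb_seconds(ttfb, size, 10_000_000)  # 10 MB/s

print(round(slow, 2), round(fast, 2))              # 10.05 5.05
print(round(ttfb_share(ttfb, size, 5_000_000), 4)) # 0.005
```

In this example TTFB accounts for about half a percent of the request. Halving TTFB would save 25 ms, while doubling throughput saves 5 seconds.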

Using latency or response time to estimate TTLB/throughput is a pretty good idea when you don’t have a way to measure throughput. And for most of the 2000s, people didn’t have a way to measure this in the real world, so TTFB was as good a metric as any.

However, with the advent of RUM (real user monitoring) measurements of page render time from companies like New Relic, and with companies like Cedexis and CloudHarmony actually measuring and reporting on real-world throughput, there’s no reason to use TTFB to make guesses as to how fast the page will load. You can choose the fastest provider based on throughput.

Whether you’re looking for a CDN or are already using one, make sure you’re optimizing for throughput. I encourage you to take advantage of our free test account and experience the CacheFly difference for yourself.
